Explore HTML treeĪs you can observe, this tree contains many tags, which contain different types of information.

#TOWEB TUTORIAL CODE#

If you print the object, you’ll see all the HTML code of the web page. soup = BeautifulSoup(req.text,"html.parser") In this way, we transform the HTML code into a tree of Python objects, as I showed before in the illustration. Let’s create the Beautiful Soup object, which parses the document using the HTML parser. In this line of code, it’s like when we type the link on the address bar, the browser transmits the request to the server and then the server performs the requested action after it looked at the request. The client communicates with the server using a HyperText Transfer Protocol(HTTP).

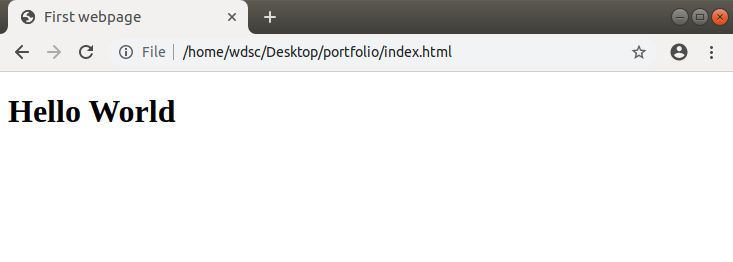

This operation can seem mysterious, but with a simple image, I show how it works. To get the web page, the first step is to create a response object, passing the URL to the get method. Since the HTML has a tree structure, there are also ancestors, descendants, parents, children and siblings. I will show an example of HTML code to make you grasp this concept. Indeed, an HTML document is composed of a tree of tags. A sort of parse tree is built for the parsed page. Let’s import: from bs4 import BeautifulSoupīeautiful Soup is a library useful to extract data from HTML and XML files. Once we terminated to look, we need to import the libraries:

#TOWEB TUTORIAL INSTALL#

The first step of the tutorial is to check if all the required libraries are installed: !pip install beautifulsoup4 The list of countries by greenhouse gas emissions will be extracted from Wikipedia as in the previous tutorials of the series. You only have to add ‘/robots.txt’ at the end of the URL to check the sections of the website allowed/not allowed.Īs an example, I am going to parse a web page using two Python libraries, Requests and Beautiful Soup. I recommend you first look at the robots.txt file to avoid legal implications. You surely aren’t allowed to scrape data from all the websites. For example, if you want to predict the Amazon product review’s ratings, you could be interested in gathering information about that product on the official website. Web scraping is the process of collecting data from the web page and store it in a structured format, such as a CSV file.
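To make the idea of a tree of tags more concrete, here is a minimal sketch of parsing and exploring a document with Beautiful Soup. It assumes a small made-up HTML snippet in place of req.text, so it runs without any network request. The table contents ("Country A", "Country B" and their values) and the variable names are placeholders for illustration, not data from the Wikipedia page.

```python
from bs4 import BeautifulSoup

# Hypothetical HTML standing in for req.text (NOT the real Wikipedia page).
html = """
<html>
  <head><title>Sample emissions table</title></head>
  <body>
    <table>
      <tr><th>Country</th><th>Share</th></tr>
      <tr><td>Country A</td><td>10</td></tr>
      <tr><td>Country B</td><td>5</td></tr>
    </table>
  </body>
</html>
"""

# Parse the document with Python's built-in HTML parser.
soup = BeautifulSoup(html, "html.parser")

# Direct access to a tag by name, and to the text it contains.
title = soup.title.string

# find() returns the first matching tag in the tree.
first_cell = soup.find("td")

# Parent: the tag that directly contains this cell.
parent_name = first_cell.parent.name  # "tr"

# Sibling: the next <td> in the same row.
sibling = first_cell.find_next_sibling("td")

# Ancestors: every enclosing tag, from the closest one outwards.
ancestors = [tag.name for tag in first_cell.parents]

# Descendants of interest: collect the cell text of every table row.
rows = [
    [cell.get_text(strip=True) for cell in row.find_all(["th", "td"])]
    for row in soup.find_all("tr")
]

print(title)
print(parent_name, sibling.get_text(), ancestors)
for row in rows:
    print(row)
```

With a real page, the same calls would be applied to the soup object built from the response text; only the tag names passed to find and find_all would need to match the structure of that page.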